“A mind-bending, bio-digital fable that pulses with poetic strangeness. Oram’s Brain Fruit is daring, disorienting, and deeply human.”

Presented in a limited edition of only 500 numbered copies, and in a design-forward format, this is not just a story: it’s an experience.

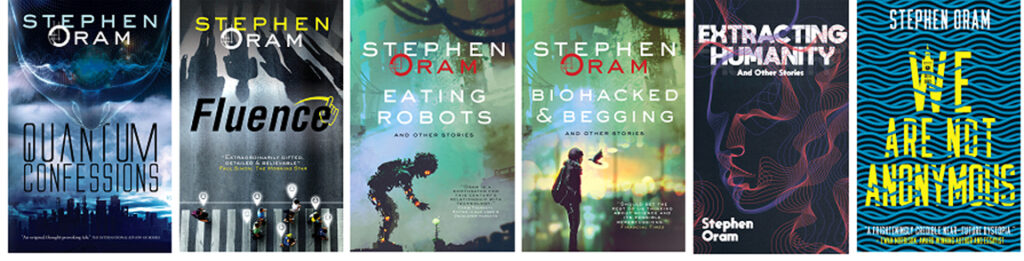

Speculative Fiction

“A soothsayer for this century’s relationship with technology.”

Chris Thornett, Editor, Linux User & Developer Magazine.

Applied Science Fiction

“Should set the rest of us thinking about science and its possible repercussions.”

Chris Nuttall, The Financial Times.

Collaborations include: King’s College London; Cybersalon digital think tank; Coventry University and the Defence Science and Technology Lab; Anhalt University of Applied Sciences; European Human Brain Project and Bristol Robotics Lab; TwinsUK; and Furtherfield (de)centre for art and tech.

Speaking

Stephen has given talks at ‘festivals of thought’ and to corporate, academic and general-public audiences, and has contributed to literary-festival and future-focused panels.

Among these are: International Robotics Showcase (keynote); Royal Society for Arts (RSA); Barbican FutureFest Late; Goethe-Institut Indonesien; Leverhulme Centre for the Future of Intelligence; Royal Anthropological Institute; World Science Fiction Convention (Worldcon); Central Saint Martins’ London Laser Lab; and the Science Museum as part of the first Human Brain Project Innovation Forum.

He is always open to, and interested in, all types of speaking events.

The playlist: interviews, talks & readings

Quotes…

“With Bradbury’s clear-sightedness and Pangborn’s wit, he pulls ways to live out from under modernity’s ‘cacophony of crap’.”

Simon Ings, Arts Editor, New Scientist.

“Combines the sharp edginess of a JG Ballard with the vaulting inventiveness of a modernist Ovid.”

Paul Simon, The Morning Star

“This is science fiction doing its day job, and doing it well.”

Ken MacLeod, author of Intrusion

“Manages to maintain humanity and humour, even when he descends into post-human worlds where the boundaries between human and non-human are queasily blurred.”

Chris Beckett, author of the Dark Eden Trilogy

“Oram’s writing places you at the human heart of all things technological.”

Dr. Kate Devlin, reader in artificial intelligence and society at King’s College London and author of Turned On: Science, Sex and Robots.

“As always this author manages to highlight human intricacies in a profound and poetical way, so that anyone can’t but fall in love with his words.”

Chiara, Bookstagrammer

“His writing is emotional without evoking drama, down to earth without being mundane and almost unsettling in its pragmatic approach to ethics.”

Ksenia Shcherbino, BSFA Review