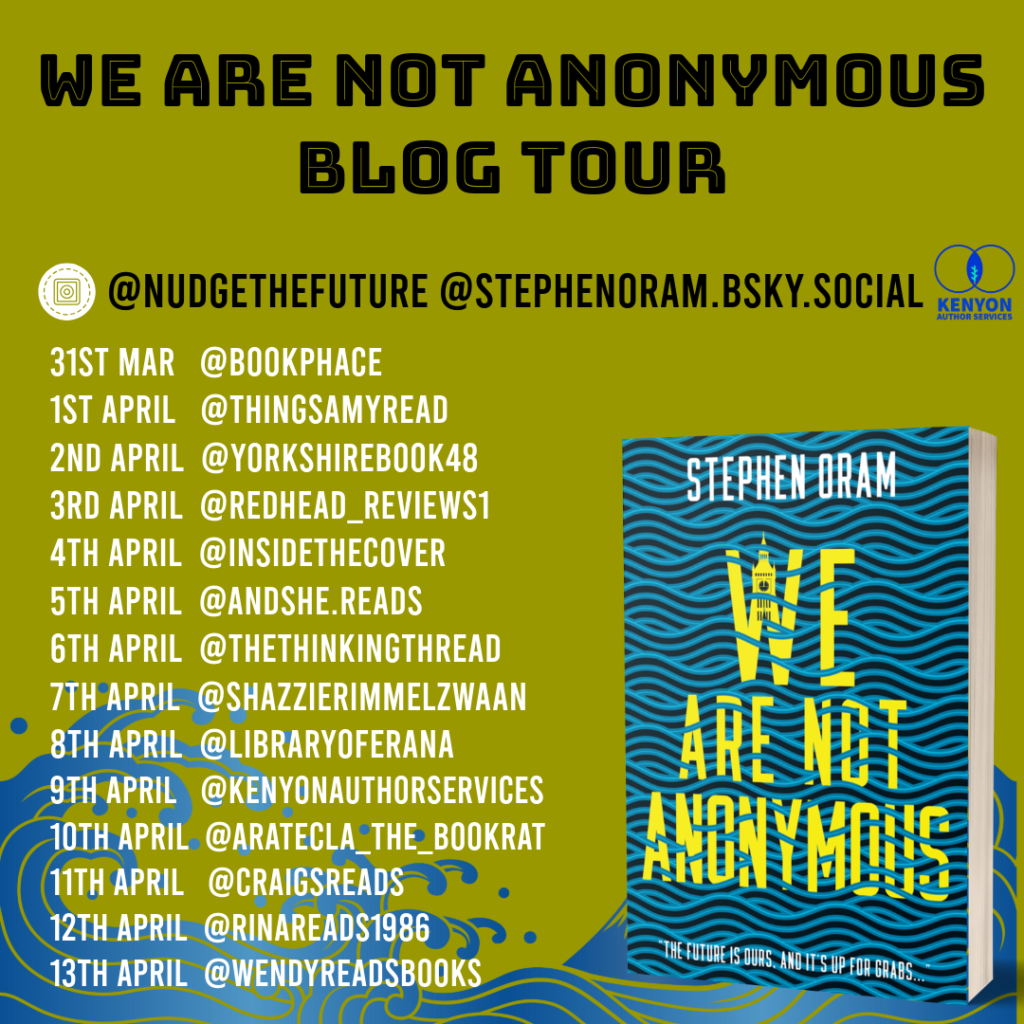

The first leg of the tour is organised by Kenyon Author Services.

(follow the tour and read the reviews)

The second leg of the tour is organised by Love Books Tours.

(follow the tour and read the reviews)

London and Bristol launches for We Are Not Anonymous announced

We ‘reveal’ the cover of the forthcoming novel “We Are Not Anonymous”

Dead Bots and Data Sets.

Who owns the deceased? Who knows the deceased? Who gets to simulate the deceased? Do we ever fully know someone? Can the ‘grief bot’ companies really replicate a person? Do they have the right data? Do they have the rights to the data?

Here are 10 minutes of ‘starter’ thoughts on the subject.

https://open.substack.com/pub/nudgethefuture/p/our-data-our-future-a-short-podcast-2d7

What a great 90 minutes this was: a deep dive into the meaning(s) behind Brain Fruit with Nikki and Tanya, the two wonderfully insightful podcasters behind Wine On An Empty Stomach.

What is probably my most experimental published piece yet is out today.

“Same Happens at the Everything Time” is in Verschränkung, the Spring/Summer issue of Sein Und Werden.

[Editor’s note: the author specified the use of the parallel line symbols in the subtitle and the morse code section headings, because they both contribute additional meaning to the piece. In addition, the author used Excel to randomise the sequence of the sentences in each section three times before picking his favourite, in a kind of digital cut-up technique.]